Turn Your Data Into Trusted Decisions — and AI That Works

Trusted data foundations, governance, and AI-ready architectures across Azure, Microsoft Fabric, Snowflake, Databricks, and Microsoft Purview, built on lakehouse patterns.

Trusted Across Public Sector and Regulated Environments

Data & AI Services

I help organisations design, build, and govern data and AI capabilities that work reliably in production. My services focus on strong data foundations, clear decision-making, and responsible AI — bridging strategy, architecture, and execution.

AI Strategy & Operating Models

Define how AI creates value in your organisation, from vision and prioritisation to governance, operating models, and enterprise AI readiness.

Analytics & Decision Intelligence

Design analytics platforms and semantic models that move beyond dashboards — enabling trusted metrics, consistent insights, and confident decision-making.

Advisory, Enablement & Training

Targeted workshops and advisory sessions for data leaders, engineers, and analysts — focused on real architectures, governance, and production realities.

AI Architecture & Implementation

Design and build AI-enabled systems — including agentic workflows, MLOps, and secure integration with enterprise data platforms — with a focus on reliability, control, and scale.

What I Help You Achieve

Capabilities that allow your organisation to trust its data, act with confidence, and deploy AI responsibly at scale.

Agentic AI Engineering (Azure, Fabric, Snowflake Cortex, Databricks Mosaic AI)

Data Management & Data Modelling (Fabric, Databricks, Snowflake, SQL)

Data Engineering & Integration (Fabric, Databricks, Snowflake)

Real-Time Context & Event Streaming

AI Data Security & Compliance

Business Intelligence & Advanced Analytics (Power BI, Excel, Power Pivot)

Portfolio

Showcasing production-grade architectures across agentic AI, data platforms, governance, and real-time decision systems.

These examples illustrate how I design safe, governed, and scalable AI capabilities that work reliably in complex enterprise and regulated environments.

Enterprise Agentic AI requires architecture, governance, quality, and time-aware decisioning — together.

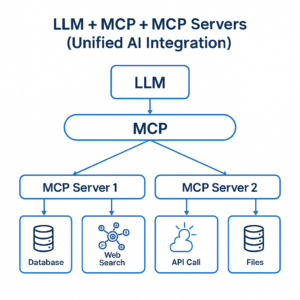

Unified Agentic AI Architecture (MCP-Based)

Secure integration of LLMs with enterprise tools through governed Model Context Protocol (MCP) servers

This pattern establishes a controlled execution layer between AI models and enterprise tools — enabling auditability, permissioning, and safe tool orchestration across data, APIs, and operational systems.

LLMs · MCP · Tool orchestration · Enterprise integration
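To make the idea of a controlled execution layer concrete, here is a minimal Python sketch of a gateway that checks role-based permissions and records an audit entry for every tool call an agent attempts. It is not the MCP SDK or any MCP server API: `ToolGateway`, `register`, `call`, and the role names are hypothetical, and a real deployment would place this logic behind an MCP server with proper identity and durable audit storage.

```python
# Minimal sketch of a governed tool-execution layer between an agent and enterprise tools.
# Hypothetical names throughout: ToolGateway, register and call are illustrative, not the MCP SDK API.
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable


@dataclass
class ToolGateway:
    # tool name -> (callable, set of roles allowed to invoke it)
    tools: dict[str, tuple[Callable[..., Any], set[str]]] = field(default_factory=dict)
    # append-only audit trail of every attempted call: allowed, denied, unknown or failed
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., Any], allowed_roles: set[str]) -> None:
        self.tools[name] = (fn, allowed_roles)

    def call(self, agent_id: str, role: str, name: str, **kwargs: Any) -> Any:
        entry = {
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "role": role,
            "tool": name,
            "args": kwargs,
        }
        if name not in self.tools:
            entry["outcome"] = "unknown_tool"
            self.audit_log.append(entry)
            raise KeyError(f"Tool not registered: {name}")
        fn, allowed_roles = self.tools[name]
        if role not in allowed_roles:
            # Deny before the tool runs, but still record the attempt.
            entry["outcome"] = "denied"
            self.audit_log.append(entry)
            raise PermissionError(f"Role {role!r} may not call {name!r}")
        try:
            result = fn(**kwargs)
        except Exception:
            entry["outcome"] = "error"
            self.audit_log.append(entry)
            raise
        entry["outcome"] = "allowed"
        self.audit_log.append(entry)
        return result


gateway = ToolGateway()
gateway.register("lookup_customer", lambda customer_id: {"id": customer_id}, {"support_agent"})
gateway.call("agent-7", "support_agent", "lookup_customer", customer_id="C42")  # allowed and audited
```

Keeping permission checks and auditing in one choke point means the model never calls a tool directly, so every action is attributable to an agent and a role.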

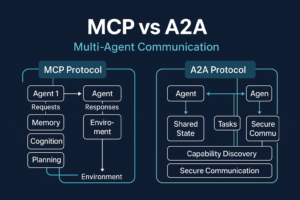

Multi-Agent Communication Models (MCP vs A2A)

Designing scalable, auditable agent-to-agent and tool-based AI systems

By contrasting MCP-based orchestration with agent-to-agent (A2A) patterns, this approach clarifies when agents should coordinate through governed tools versus autonomous peer interaction — balancing flexibility with control.

Agentic AI · Protocol design · Governance patterns

Data Quality Framework for Agentic AI

Ensuring trustworthy, explainable, and safe AI through multi-layer data quality controls

The framework enforces semantic, structural, and operational quality across ingestion, transformation, and inference — ensuring agents act on reliable context, not corrupted or ambiguous data.

Semantic quality · Observability · AI governance
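The layered idea can be illustrated with a small Python sketch that runs structural, semantic, and operational checks in order and returns any issues found, so an agent only acts on records that pass every layer. The field names, allowed status values, and 15-minute freshness limit are hypothetical examples, not part of any specific framework.

```python
# Minimal sketch of layered data-quality gates applied before an agent acts on a record.
# Hypothetical: field names, allowed values and the freshness limit are illustrative only.
from datetime import datetime, timedelta, timezone

REQUIRED_FIELDS = {"case_id": str, "status": str, "updated_at": datetime}
ALLOWED_STATUS = {"open", "escalated", "closed"}
MAX_AGE = timedelta(minutes=15)


def structural_issues(record: dict) -> list[str]:
    # Structural layer: required fields are present with the expected types.
    issues = []
    for name, expected in REQUIRED_FIELDS.items():
        if name not in record:
            issues.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            issues.append(f"wrong type for {name}")
    return issues


def semantic_issues(record: dict) -> list[str]:
    # Semantic layer: values must be valid in the business vocabulary.
    if record.get("status") not in ALLOWED_STATUS:
        return [f"unknown status: {record.get('status')!r}"]
    return []


def operational_issues(record: dict, now: datetime) -> list[str]:
    # Operational layer: the record must be fresh enough to act on.
    if now - record["updated_at"] > MAX_AGE:
        return ["stale record"]
    return []


def quality_gate(record: dict, now: datetime) -> list[str]:
    # Run layers in order and stop at the first one that fails, because later
    # layers assume earlier ones passed (freshness needs a valid timestamp).
    for check in (structural_issues, semantic_issues):
        issues = check(record)
        if issues:
            return issues
    return operational_issues(record, now)


now = datetime.now(timezone.utc)
record = {"case_id": "C-1", "status": "open", "updated_at": now - timedelta(minutes=5)}
print(quality_gate(record, now))  # an empty list means the record passed every layer
```

Returning explicit issue messages, rather than a bare pass/fail, gives observability tooling something to count and alert on.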

Real-Time Decision Architecture

Enabling low-latency, context-aware decisions through streaming-first data design

By processing events as they occur and maintaining state across time, these architectures eliminate blind spots caused by batch delays, reprocessing, or fragmented pipelines — enabling automation, alerting, and AI inference on live operational signals.

Streaming · Event-driven systems · Real-time analytics

Event-Driven & Real-Time Decision Architecture

By combining streaming ingestion, stateful processing, and contextual enrichment, these architectures ensure decisions are made as events happen, not minutes or hours later.

This approach enables organisations to react to operational signals, behavioural changes, and system events immediately — powering automation, alerting, and AI inference on live data rather than stale snapshots.

Unlike batch-centric pipelines, event-driven systems preserve continuity and context across time, eliminating blind spots caused by delayed joins, recomputation, or disconnected processing stages.

Streaming • Event-Driven Systems • Stateful Processing • Real-Time Analytics • Decision Automation
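Stateful processing is what separates this from batch. As a minimal sketch, assuming events arrive in time order per key, the Python class below keeps per-key state between events and raises an alert as soon as a threshold is crossed inside a sliding window, instead of waiting for a scheduled job. The class name, 60-second window, and threshold are hypothetical; in production this logic would usually run in a streaming engine with durable, checkpointed state.

```python
# Minimal sketch of stateful, event-at-a-time processing with per-key sliding windows.
# Assumptions: events arrive in timestamp order per key; window and threshold are hypothetical.
from collections import defaultdict, deque
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Event:
    key: str    # e.g. a device or account identifier
    ts: float   # event time in seconds


class SlidingWindowDetector:
    def __init__(self, window_seconds: float = 60.0, threshold: int = 3):
        self.window = window_seconds
        self.threshold = threshold
        # Per-key state: timestamps of recent events, kept across calls to process().
        self.state: dict[str, deque] = defaultdict(deque)

    def process(self, event: Event) -> Optional[str]:
        recent = self.state[event.key]
        recent.append(event.ts)
        # Evict timestamps that have fallen out of the window relative to this event.
        while recent and event.ts - recent[0] > self.window:
            recent.popleft()
        # Decide immediately, on this event, rather than in a later batch run.
        if len(recent) >= self.threshold:
            return f"ALERT {event.key}: {len(recent)} events within {self.window:g}s"
        return None


detector = SlidingWindowDetector(window_seconds=60, threshold=3)
for e in [Event("acct-1", 0), Event("acct-1", 20), Event("acct-1", 50)]:
    alert = detector.process(e)
    if alert:
        print(alert)
```

Because state lives with the key, the third event can trigger an alert on arrival, with no join against a previously computed snapshot.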

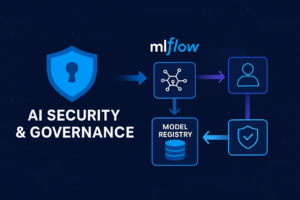

AI Security, Governance & Model Control

I design governance and security controls that span the full AI lifecycle — from model development and experimentation through deployment, monitoring, and retirement.

This includes model versioning, lineage, access control, and auditability, ensuring every prediction can be traced back to its data, code, and configuration.

By embedding governance directly into MLOps pipelines, organisations can deploy AI systems with confidence and meet regulatory, ethical, and operational requirements without slowing delivery or innovation.

MLOps · MLflow · Model governance · Security controls
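Traceability from a prediction back to its data, code, and configuration can be sketched with content hashes. The Python example below is a minimal illustration under stated assumptions: `LineageRecord`, `TracedPrediction`, and `predict_with_lineage` are hypothetical names, and the commit SHA is assumed to be supplied by the CI pipeline. MLflow can record comparable metadata as run parameters and tags, but this sketch uses only the standard library.

```python
# Minimal sketch of a lineage record tying each prediction to its data, code and configuration.
# Hypothetical structure: names and fields are illustrative, not an MLflow or vendor schema.
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Callable


def fingerprint(obj) -> str:
    # Stable SHA-256 over a canonical JSON encoding (sorted keys, no extra whitespace).
    payload = json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


@dataclass(frozen=True)
class LineageRecord:
    model_name: str
    model_version: str
    code_commit: str         # assumed to come from the CI pipeline
    config_hash: str         # fingerprint of the training configuration
    training_data_hash: str  # fingerprint of the training data snapshot or manifest


@dataclass(frozen=True)
class TracedPrediction:
    input_hash: str
    output: float
    lineage: LineageRecord


def predict_with_lineage(model_fn: Callable[[dict], float], features: dict,
                         lineage: LineageRecord) -> TracedPrediction:
    # Attach the model's lineage and a hash of the exact input to every prediction.
    return TracedPrediction(fingerprint(features), model_fn(features), lineage)


config = {"learning_rate": 0.1, "max_depth": 4}
lineage = LineageRecord("churn", "3", "abc123", fingerprint(config), fingerprint(["batch-2024-01"]))
pred = predict_with_lineage(lambda f: 0.5 * f["tenure"], {"tenure": 2}, lineage)
print(json.dumps(asdict(pred), indent=2))
```

Storing the record alongside each prediction lets an auditor answer "which model, code, and data produced this?" without reconstructing it after the fact.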

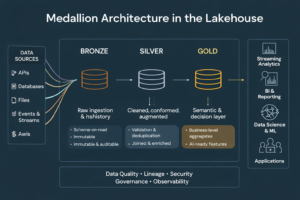

Medallion Architecture — Decision-Ready Lakehouse

I design lakehouse architectures using the Medallion pattern to transform raw, heterogeneous data into trusted, decision-ready assets.

Data flows deliberately from raw ingestion (Bronze) through cleaned and conformed datasets (Silver) to business-aligned, semantic models (Gold) — ensuring data quality, lineage, and consistency at every stage.

This approach supports reliable analytics, AI workloads, and reporting without untracked copies of data, brittle pipelines, or uncontrolled transformations, making the lakehouse a governed foundation rather than a data swamp.

Bronze–Silver–Gold • Lakehouse • Data Quality • Lineage • Semantic Modelling
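The flow between layers can be illustrated in a few lines of Python. This is a minimal, in-memory sketch with hypothetical order data: Bronze keeps raw rows untouched, Silver deduplicates, conforms types, and quarantines invalid rows, and Gold produces a business aggregate. On an actual lakehouse each layer would typically be a Delta or Iceberg table processed with Spark or SQL.

```python
# Minimal in-memory sketch of Bronze -> Silver -> Gold refinement.
# Hypothetical data and rules; real layers would be lakehouse tables, not Python lists.
from collections import defaultdict
from datetime import date

# Bronze: raw records kept exactly as ingested, including duplicates and bad values.
bronze = [
    {"order_id": "1", "region": " north ", "amount": "120.50", "order_date": "2024-03-01"},
    {"order_id": "1", "region": " north ", "amount": "120.50", "order_date": "2024-03-01"},
    {"order_id": "2", "region": "SOUTH", "amount": "80", "order_date": "2024-03-01"},
    {"order_id": "3", "region": "north", "amount": "not-a-number", "order_date": "2024-03-02"},
]


def to_silver(rows):
    # Silver: deduplicate on the business key, conform types and casing, quarantine bad rows.
    seen, clean, quarantined = set(), [], []
    for row in rows:
        if row["order_id"] in seen:
            continue
        try:
            amount = float(row["amount"])
        except ValueError:
            quarantined.append(row)  # kept for investigation rather than silently dropped
            continue
        seen.add(row["order_id"])
        clean.append({
            "order_id": row["order_id"],
            "region": row["region"].strip().lower(),
            "amount": amount,
            "order_date": date.fromisoformat(row["order_date"]),
        })
    return clean, quarantined


def to_gold(rows):
    # Gold: business-aligned aggregate, here revenue per region.
    revenue = defaultdict(float)
    for row in rows:
        revenue[row["region"]] += row["amount"]
    return dict(revenue)


silver, quarantine = to_silver(bronze)
gold = to_gold(silver)
print(gold)
```

Keeping Bronze immutable means Silver and Gold can always be rebuilt from source when a rule changes, which is what preserves lineage.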

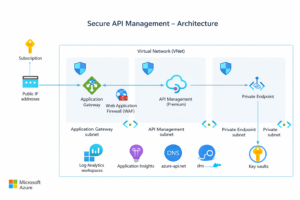

Secure API Management for Regulated Data Platforms

I design secure API management architectures for regulated and high-risk environments, ensuring data platforms are accessible without compromising security, compliance, or operational control.

By combining private networking, identity-based access, policy enforcement, and observability, these architectures enable controlled data ingestion and service exposure across internal teams, partners, and systems.

This pattern is critical for organisations operating in public sector, healthcare, and enterprise environments where security, auditability, and reliability are non-negotiable.

API Management • Zero Trust • Private Networking • Identity & Access • Compliance
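As an illustration of deny-by-default policy enforcement, the Python sketch below combines a private-network check with a per-route scope check. It assumes the access token has already been validated upstream (signature, issuer, expiry), and the route table, scope names, and address ranges are hypothetical. It is not the policy language of Azure API Management or any other gateway product.

```python
# Minimal sketch of identity- and network-based checks an API gateway might enforce.
# Hypothetical routes, scopes and ranges; token validation is assumed to happen upstream.
import ipaddress
from dataclasses import dataclass

# Only callers inside these private ranges may reach the API (e.g. via private endpoints).
ALLOWED_NETWORKS = [ipaddress.ip_network("10.0.0.0/8"), ipaddress.ip_network("172.16.0.0/12")]

# Required scope per (method, path) route.
ROUTE_SCOPES = {
    ("POST", "/ingest"): "data.write",
    ("GET", "/datasets"): "data.read",
}


@dataclass(frozen=True)
class Request:
    method: str
    path: str
    source_ip: str
    token_scopes: frozenset  # scopes taken from an already-validated access token


def authorise(req: Request) -> tuple[bool, str]:
    # Deny by default: the request is allowed only if every check passes.
    addr = ipaddress.ip_address(req.source_ip)
    if not any(addr in net for net in ALLOWED_NETWORKS):
        return False, "source network not allowed"
    required = ROUTE_SCOPES.get((req.method, req.path))
    if required is None:
        return False, "unknown route"
    if required not in req.token_scopes:
        return False, f"missing scope {required}"
    return True, "allowed"


print(authorise(Request("POST", "/ingest", "10.1.2.3", frozenset({"data.write"}))))
```

Returning a reason alongside the decision makes every denial auditable, which matters as much as the decision itself in regulated settings.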

Ready to Build Production-Ready Data & AI — Not Another POC?

Let’s design secure, governed data and AI platforms that drive real decisions in production, not dashboards, experiments, or stalled pilots.

Trusted by public-sector and regulated organisations where reliability, auditability, and governance are non-negotiable.

Ahmed · Principal Data & AI Engineer

Principal Data Engineer & AI Advisor

I help organisations turn complex, fragmented data landscapes into trusted, decision-ready intelligence and AI systems that work reliably in production.

My background spans science, health informatics, and large-scale data engineering, which shapes how I approach AI: rigour first, governance by design, and measurable outcomes over hype.

I work at the intersection of data engineering, analytics, and AI strategy, designing platforms for analytics, real-time decisions, and AI at scale that are secure, auditable by default, and built for long-term sustainability.

How I create impact

Designing production-grade data & AI platforms (not demos)

Embedding data quality, lineage, and governance into architecture

Delivering AI-ready foundations for regulated and public-sector environments

Bridging data engineering, governance, and production-ready AI

Areas of Expertise

Agentic AI & MCP — safe, governed agent and tool-based AI systems

Lakehouse & Modern Data Platforms — Fabric, Databricks, Snowflake, hybrid

Data Quality & Governance — lineage, semantic quality, trust at scale

Analytics & Decision Intelligence — metrics, semantic models, decisions

Security & Compliance — regulated data, privacy, access control